Semantic Data Models from Structured Files

This tutorial guides you through creating a Semantic Data Model from a structured file, such as a CSV or Excel file. This is useful when your agent receives similar input files on a regular basis and you want to train it to understand the data and answer questions about it.

When you create a Semantic Data Model from a structured file, the data from the file is NOT stored permanently in the Semantic Data Model or in your agent. Instead, whenever you upload a new file in a thread, the agent uses the Semantic Data Model to understand the data and answer questions about it. Think of this as teaching the agent to better understand the "work item" files it receives.

Example use case

Imagine your agent processes factory production data from Excel files, which arrive on a regular basis as input work item files. You want to train the agent to understand the data and answer questions about it, but you don't want to store the data of any single file permanently in the Semantic Data Model or in your agent. The file only serves as an example for creating the model.

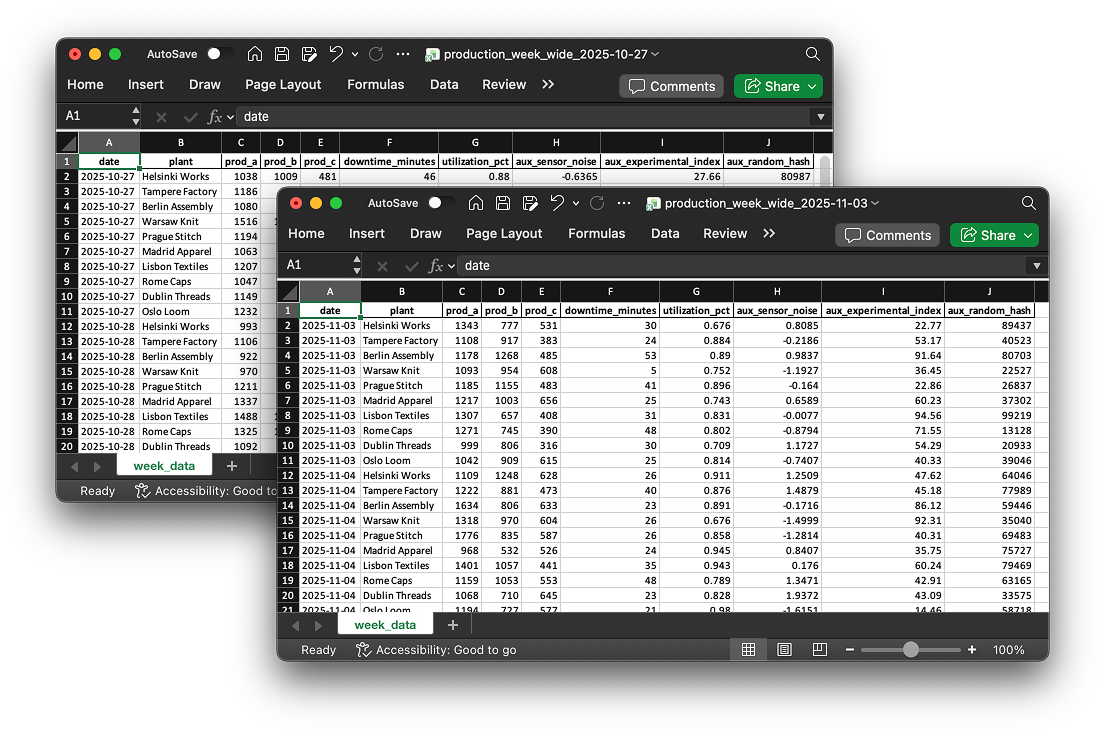

Your data looks like the files shown above. Download the files here to try it out yourself!

Each file contains production data of various plants, like production volumes for prod_a, prod_b, prod_c, downtime minutes and utilization percentage. But what do these columns mean? Let's assume that:

- prod_a is the number of t-shirts produced,

- prod_b is the number of socks produced,

- prod_c is the number of caps produced.

Once the model is created, every time a new file with a similar structure is uploaded, the agent will use the Semantic Data Model to understand the data and answer questions about it - referring to shirts, socks and caps instead of prod_a, prod_b and prod_c.
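To make the idea concrete, here is a minimal conceptual sketch in Python. It is not how Studio stores or applies a Semantic Data Model internally; the dictionary `COLUMN_MEANINGS`, the function `to_business_names` and the sample row are all hypothetical and exist only to illustrate how a mapping from cryptic column names to business terms can be reused for every new file with the same structure.

```python
# Hypothetical stand-in for the semantic layer: column name -> business term.
# This is an illustration only, not Studio's actual model format.
COLUMN_MEANINGS = {
    "prod_a": "t_shirts",
    "prod_b": "socks",
    "prod_c": "caps",
}


def to_business_names(row: dict) -> dict:
    """Return a copy of the row with known column names replaced by business terms."""
    return {COLUMN_MEANINGS.get(col, col): value for col, value in row.items()}


# Made-up sample row; the values are not taken from the demo files.
sample_row = {
    "date": "2024-01-01",
    "plant": "Plant 1",
    "prod_a": 120,
    "prod_b": 300,
    "prod_c": 45,
}

renamed = to_business_names(sample_row)
print(renamed)
```

Note that the mapping holds no production data itself. Only the meaning of the columns is kept, which mirrors the point that the sample file's contents are not stored in the model.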

Step-by-step guide

Follow these easy steps to create the Semantic Data Model based on a structured file!

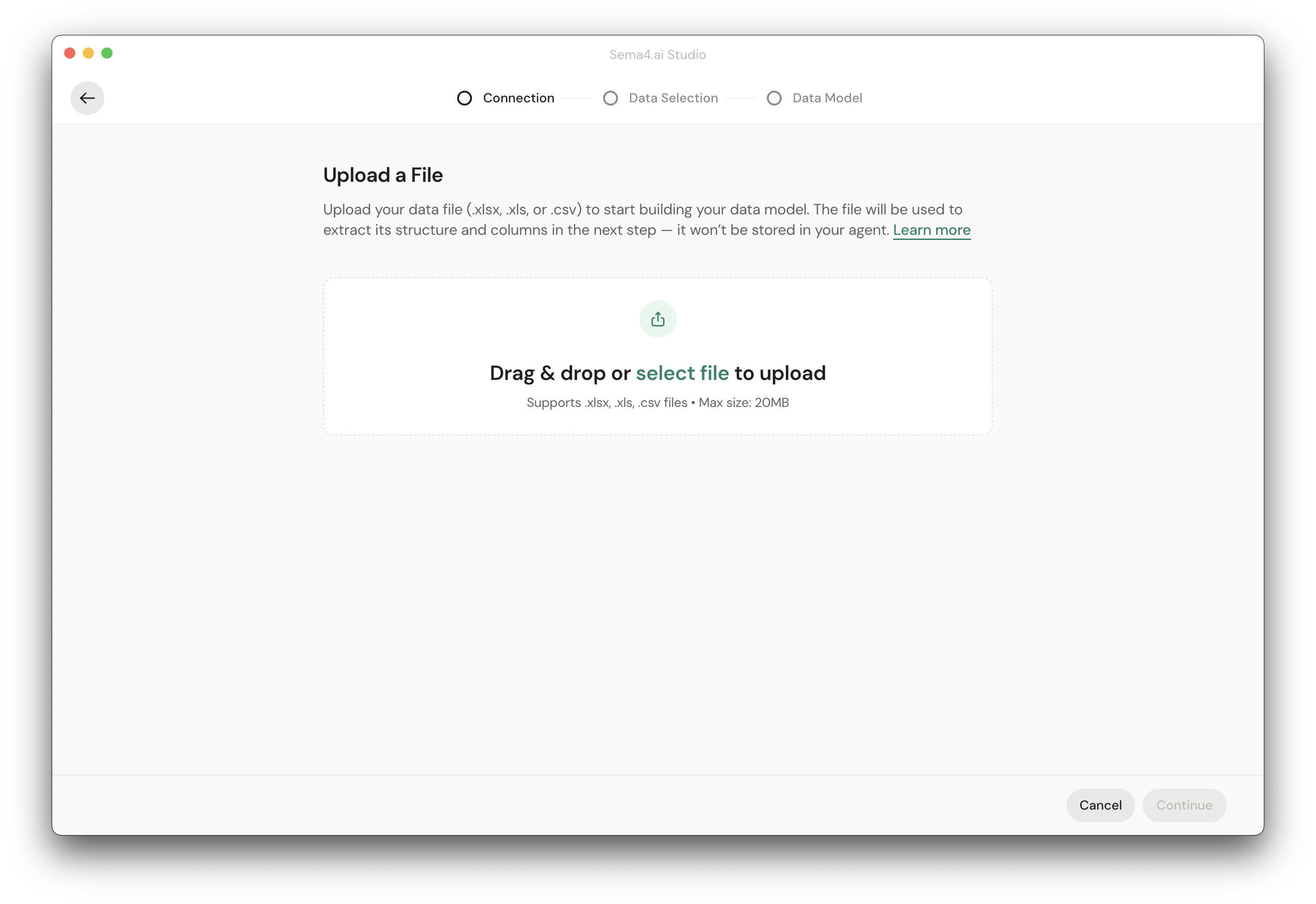

Upload sample file

First, you'll need to upload a sample file to Studio. Drag and drop your file into the upload area. If you are using the demo files from above, just pick either of the files!

The current implementation supports only one file per Semantic Data Model. This will be extended in the future to support multiple files.

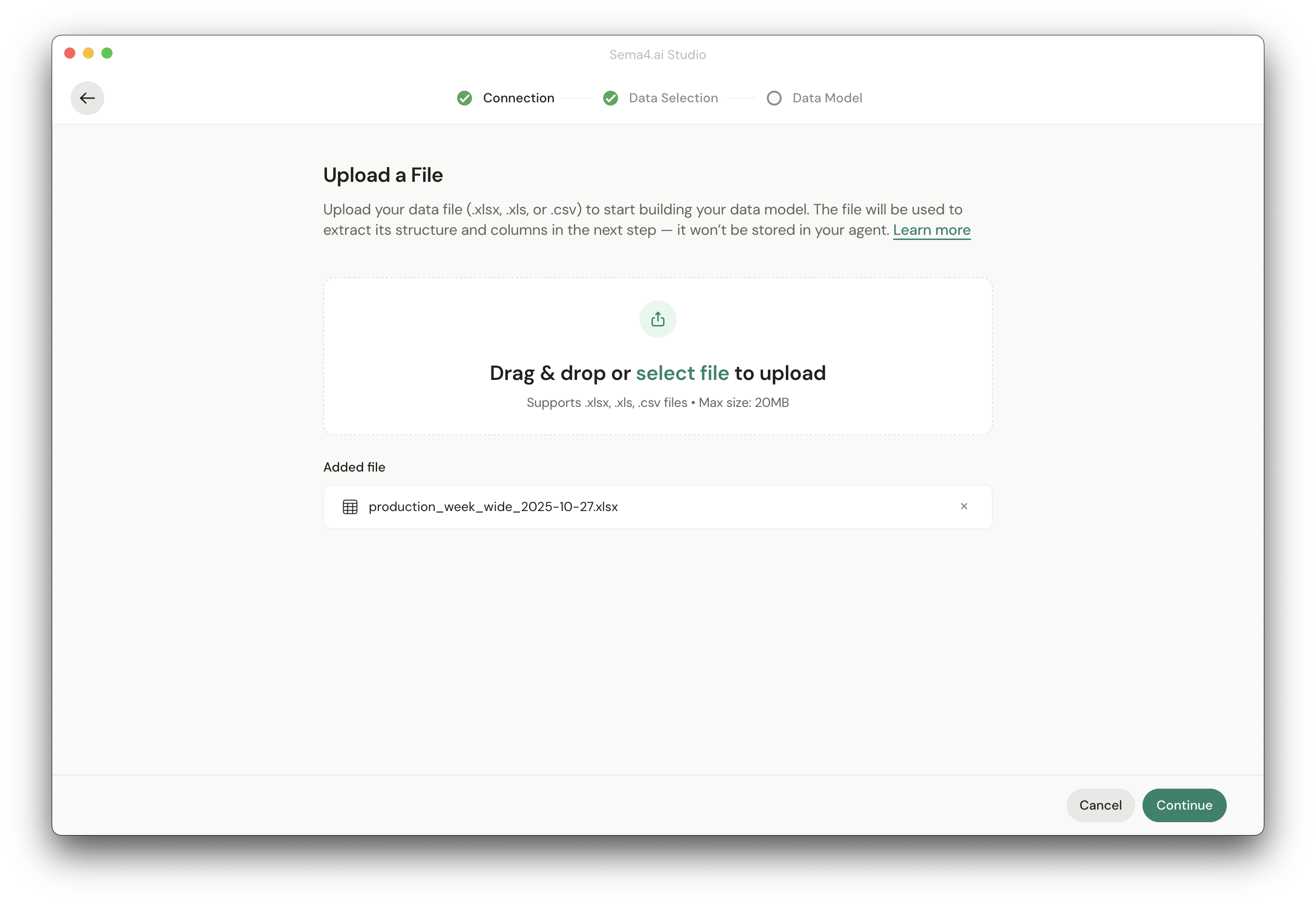

Once the file is uploaded, you'll see it in the list of files. Now it's time to hit Continue to proceed to the next step.

Business context

Next, you'll be asked to provide business context.

You can provide a freeform text description of the data you want to include in the Semantic Data Model. Studio uses this description to help generate the Semantic Data Model automatically. The more detailed, the better. Look for existing descriptions of your dataset in Word docs, Google Docs or elsewhere. Just copy and paste the text from your sources into the text area, and don't sweat the formatting - this is only for the AI's eyes!

With the example data, you can copy and paste the following text:

Domain: Manufacturing production data for apparel items (t-shirts, socks, caps).

Structure:

Each Excel file represents one week of production data. Each row is a plant on a specific date.

Columns:

date - calendar date

plant - name of the manufacturing plant

prod_a - number of t-shirts produced

prod_b - number of socks produced

prod_c - number of caps produced

downtime_minutes - minutes of production downtime

utilization_pct - percentage of capacity used

aux_sensor_noise - noise value from production machine sensors, not used for reporting

aux_experimental_index - experimental or placeholder variable

aux_random_hash - random numeric identifier

Meaning:

Granularity: one row per plant per day

Primary metrics: t_shirts (prod_a), socks (prod_b), caps (prod_c), downtime_minutes, utilization_pct

Aux fields: auxiliary data for testing or placeholder features

Use cases: production reporting, efficiency tracking, production trend analysis, downtime/utilization studies, anomaly testing

Select data

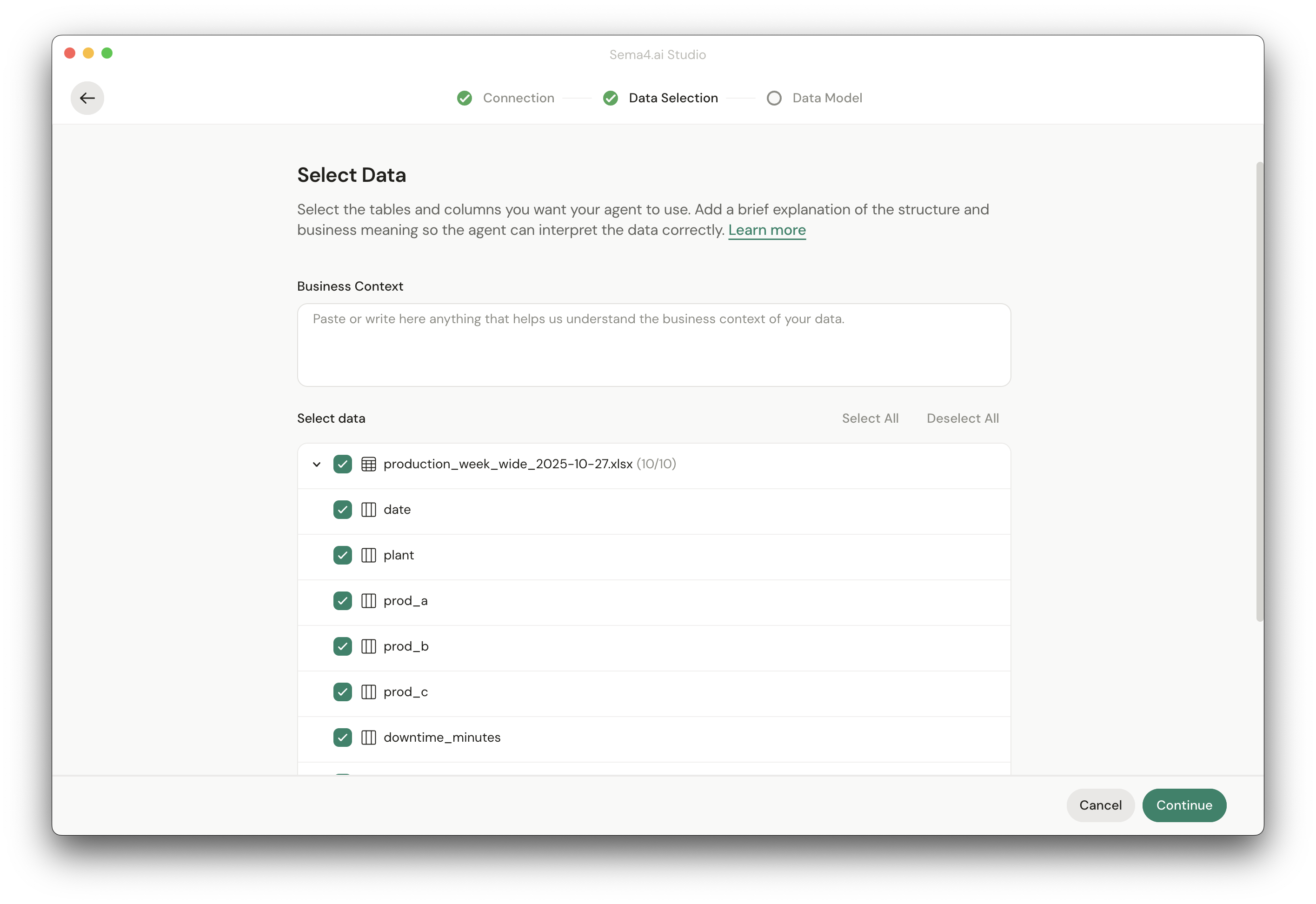

In the same view as the business context, you'll be able to select the data you want to include in the Semantic Data Model. The view shows all sheets in the file along with the columns in each sheet.

Only select the data that is REALLY needed for the model and agent. More data means more chances of going wrong!

You can select the data by clicking the checkbox next to the sheet name. You can also select individual columns by clicking the checkbox next to the column name.

With the example data, you can simply select all columns from the uploaded Excel file.

Once you've selected the data, you can click Continue to proceed to the next step.

Generate the Semantic Data Model

Time to watch Sai at work! Our AI looks at the sheets and columns in your file, samples some data, and then uses the provided business context to generate the Semantic Data Model. This might take a few minutes depending on the amount of data you've selected.

Once the Semantic Data Model is generated, you have two options:

- Review - Review the generated Semantic Data Model and make improvements if needed.

- Go to Agent - Go straight to the agent and see the Semantic Data Model in action. You can always come back to make edits later!

You'll notice a "Model Completeness" score that is grayed out. We are working on this feature and it will be available shortly. The score will tell you how well we think the model represents your data and business context, and will help you judge whether the model is good enough for your use case.

In this tutorial, we'll now go straight to the agent and see the Semantic Data Model in action! Feel free to review the Editing Semantic Data Model section later.

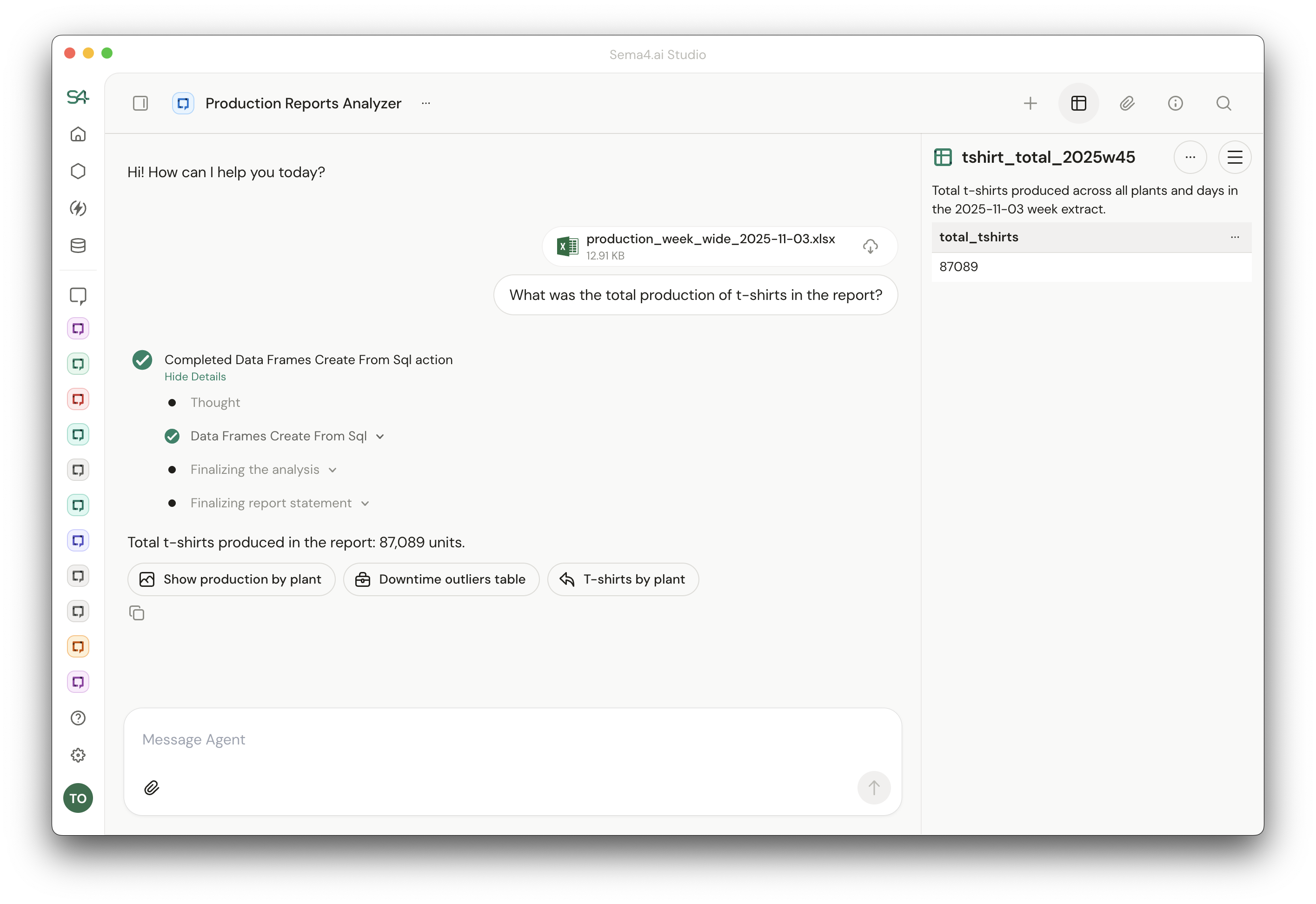

Try the model in an agent

Once you click Go to Agent, you'll be taken to the agent view. As this is a file-based Semantic Data Model, there is no file in your thread yet that maps to your newly created Semantic Data Model. So create a new chat and add one of the two demo files to it, along with a question that you want answered about the data in the file.

If you are working with the demo files, you could ask something like "What was the total production of t-shirts in the report?". The agent will use the Semantic Data Model to generate a SQL query, show it to you in tool call envelopes, and run it against the file.

The resulting dataset goes to a DataFrame, so it will not fill up the agent's context window even with large results.
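As a rough illustration of the query step described above, the sketch below uses Python's built-in `sqlite3` module. The SQL engine the agent actually uses, the table name `production` and the sample rows are all assumptions made for this example, and the query the agent generates for your file may look different. The point is only that a question about "t-shirts" ends up as a query over the `prod_a` column.

```python
import sqlite3

# Made-up rows standing in for an uploaded file; not taken from the demo files.
rows = [
    ("2024-01-01", "Plant 1", 120, 300, 45),
    ("2024-01-01", "Plant 2", 80, 150, 30),
    ("2024-01-02", "Plant 1", 100, 280, 50),
]

# In-memory database with a hypothetical table name.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE production "
    "(date TEXT, plant TEXT, prod_a INTEGER, prod_b INTEGER, prod_c INTEGER)"
)
conn.executemany("INSERT INTO production VALUES (?, ?, ?, ?, ?)", rows)

# The question mentions t-shirts; the business context maps that term to prod_a.
query = "SELECT SUM(prod_a) AS total_t_shirts FROM production"
total_t_shirts = conn.execute(query).fetchone()[0]
print(total_t_shirts)

conn.close()
```

When you try this in Studio, compare the query shown in the tool call envelope with your business context to check that the agent picked the column you expected.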

In a production scenario, the instructions on what to do with the file would typically be in the Runbook, allowing the agent to process the incoming work items independently.