Table of Contents:

- Introduction

- What are the key takeaways about LLM agents?

- What is an LLM agent?

- How does an LLM agent work?

- What are the core components of LLM agent architecture?

- How do LLM agents differ from standard large language models?

- What are the main types of LLM agents?

- What are the benefits of LLM agents for enterprise organizations?

- What challenges do organizations face when deploying LLM agents?

- What frameworks and approaches support LLM agents?

- How are LLM agents used in production today?

- How does Sema4.ai use LLM agents for enterprise automation?

- What does the future of LLM agents look like?

- What questions do people ask about LLM agents?

An LLM agent is an AI system that uses a large language model as its core reasoning engine to plan, take actions, and complete complex tasks autonomously.

Introduction

Large language models have transformed how organizations approach artificial intelligence, but the next evolution goes far beyond text generation. Large language model agents – commonly called LLM agents – represent a fundamental shift in what AI can accomplish. Rather than simply responding to prompts, an LLM agent can reason, plan, and take multi-step actions in the real world.

So what is an LLM agent exactly? It’s an AI system that connects a large language model to external tools, memory, and data systems to complete tasks autonomously. This architecture lets the model do things a standalone language model cannot, such as retrieving live data and updating records in external systems.

Enterprise organizations are increasingly deploying LLM agents to automate document-heavy workflows such as invoice processing and accounts payable operations. These agents handle complex reasoning tasks that require adapting to variable inputs, integrating with enterprise systems such as SAP and Workday, and reducing manual workload while improving operational accuracy across finance and procurement teams.

What are the key takeaways about LLM agents?

Before diving deeper, here are the essential points you need to understand about LLM agents:

- An LLM agent uses a large language model as its core reasoning engine to plan and take action across complex tasks.

- LLM agents go beyond chat by connecting to tools, memory systems, and external data sources.

- Core components of LLM agent architecture include the reasoning engine, memory, planning, and tool use.

- LLM agents differ from standard LLMs by taking multi-step actions rather than generating a single text response.

- Enterprise AI platforms such as Sema4.ai use LLM agents to automate finance, procurement, and operations workflows.

What is an LLM agent?

An LLM agent is an AI system built around a large language model that can reason, plan, and take autonomous actions to complete multi-step tasks. Unlike standard LLMs that respond to a single prompt with a single output, an LLM agent operates in a continuous loop – perceiving its environment, reasoning about what to do, taking actions using tools, and evaluating results.

This fundamental difference is what makes large language model agents so powerful for enterprise applications. While a standard language model might answer a question about an invoice, an LLM agent can actually process that invoice, extract relevant data, match it against purchase orders, identify discrepancies, and update financial systems – all without human intervention.

LLM agents are designed to:

- Break complex goals into smaller, actionable steps that can be executed sequentially

- Use tools such as APIs, search engines, databases, or code interpreters to interact with external systems

- Retain memory across steps within a task to maintain context and continuity

- Adapt dynamically based on feedback from their environment

This positions LLM agents as a foundational component of enterprise automation, enabling organizations to deploy AI that handles end-to-end workflows rather than isolated tasks.

How does an LLM agent work?

Understanding how an LLM agent works requires examining the perception-reasoning-action loop that drives its behavior. This continuous cycle is what distinguishes an agent from a simple chatbot or text generator.

When an LLM agent receives a task, it doesn’t simply generate a response and stop. Instead, it enters a loop where it reasons about the task, takes action, evaluates the result, and determines what to do next. This process continues until the task is complete or the agent determines that human escalation is required.

Here are the key steps in an LLM agent’s operation:

- Receive a task or goal from a user, orchestrator, or trigger event such as an incoming email or system notification

- Reason about the task and plan a sequence of steps using the LLM’s natural language understanding capabilities

- Select and call the appropriate tool or action such as querying a database, extracting data from a document, or calling an API

- Evaluate the result and determine whether additional steps are needed

- Repeat until the task is complete or escalate to a human when the agent encounters uncertainty or exceptions

This iterative process allows LLM agents to handle workflows that are difficult or impossible for traditional automation tools, which rely on rigid, predefined rules rather than dynamic reasoning.
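The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `scripted_model` is a hypothetical stand-in for a real LLM call, and `lookup_invoice` is an invented tool that returns canned data.

```python
from typing import Callable

# Hypothetical tool: a plain function standing in for a real integration.
def lookup_invoice(invoice_id: str) -> dict:
    return {"invoice_id": invoice_id, "status": "unpaid", "amount": 120.0}

TOOLS: dict[str, Callable[[str], dict]] = {"lookup_invoice": lookup_invoice}

def scripted_model(task: str, history: list[dict]) -> dict:
    """Stand-in for an LLM call: picks the next step from what has happened so far."""
    if not history:
        return {"type": "tool", "name": "lookup_invoice", "arg": "INV-1"}
    last = history[-1]["result"]
    return {"type": "finish", "answer": f"Invoice {last['invoice_id']} is {last['status']}"}

def run_agent(task: str, model=scripted_model, max_steps: int = 5) -> str:
    history: list[dict] = []
    for _ in range(max_steps):
        decision = model(task, history)                # reason about the next step
        if decision["type"] == "finish":
            return decision["answer"]                  # task complete
        tool = TOOLS.get(decision["name"])
        if tool is None:
            return "ESCALATE: unknown tool requested"  # hand off to a human
        result = tool(decision["arg"])                 # take the action
        history.append({"decision": decision, "result": result})  # keep the result for the next round
    return "ESCALATE: step limit reached"

print(run_agent("What is the status of invoice INV-1?"))  # prints: Invoice INV-1 is unpaid
```

The step limit matters: without it, a model that never decides to finish would loop indefinitely instead of escalating.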

What are the core components of LLM agent architecture?

LLM agent architecture consists of several interconnected components that work together to enable autonomous task completion. Understanding these components is essential for anyone evaluating or implementing agent-based automation.

The core components of LLM agent architecture include:

- LLM reasoning engine – the core language model that interprets instructions and makes decisions

- Memory – both short-term context and long-term retrieval systems

- Planning – task decomposition and sequencing capabilities

- Tool use – integrations with external systems and APIs

Let’s examine each component in detail.

What role does the LLM play as the reasoning engine?

The large language model serves as the central reasoning engine of any LLM agent. It interprets instructions, evaluates context, and determines what action to take at each step in a workflow. This reasoning capability is what allows agents to handle ambiguous or variable inputs without relying on rigid rule sets.

Unlike traditional automation systems that require explicit programming for every scenario, the LLM reasoning engine can understand natural language instructions, interpret context, and make judgment calls when faced with novel situations. This flexibility is particularly valuable in enterprise environments where document formats, process variations, and edge cases are common.

The reasoning engine also enables what’s known as chain-of-thought processing, where the agent explicitly works through its logic step by step. This not only improves accuracy but also provides transparency into how the agent reached its conclusions – a critical requirement for enterprise deployments where auditability matters.

What is the role of memory in LLM agents?

Memory is a crucial component of LLM agent architecture that enables continuity and context retention across multi-step workflows. Without memory, an agent would lose track of what it had already accomplished and what information it had gathered.

There are two primary types of memory in LLM agents:

Short-term memory refers to the active context window used during a task. This includes the current conversation, recent actions taken, and immediate context needed to complete the current step. Short-term memory is typically maintained within the LLM’s context window and persists throughout a single task or session.

Long-term memory involves external storage systems such as vector databases that allow agents to retrieve relevant information across sessions. This enables agents to remember past interactions, access organizational knowledge bases, and maintain continuity over extended periods.

Together, these memory systems allow LLM agents to handle complex workflows that span multiple steps, documents, or even multiple sessions – capabilities that are essential for enterprise automation.
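To make the two memory types concrete, here is a small sketch. Both class names are hypothetical. The long-term store ranks records by simple word overlap purely to keep the example runnable; real systems typically compare embeddings in a vector database instead.

```python
from collections import deque

class ShortTermMemory:
    """Keeps only the most recent entries, like a bounded context window."""
    def __init__(self, max_items: int = 3):
        self.items = deque(maxlen=max_items)

    def add(self, entry: str) -> None:
        self.items.append(entry)

    def context(self) -> list[str]:
        return list(self.items)

class LongTermMemory:
    """Toy retrieval store that ranks records by word overlap with the query."""
    def __init__(self):
        self.records: list[str] = []

    def store(self, text: str) -> None:
        self.records.append(text)

    def retrieve(self, query: str, k: int = 1) -> list[str]:
        query_words = set(query.lower().split())
        scored = [(len(query_words & set(r.lower().split())), r) for r in self.records]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [text for score, text in ranked[:k] if score > 0]

short = ShortTermMemory(max_items=2)
for step in ["read invoice", "found PO number", "fetched PO"]:
    short.add(step)                    # the oldest entry falls out of the window

long_term = LongTermMemory()
long_term.store("Vendor Acme sends invoices as scanned PDFs")
long_term.store("Vendor Globex requires a PO number on every invoice")
```

Here `short.context()` holds only the last two steps, while `long_term.retrieve("How does Acme send invoices")` pulls back the Acme record even though it was stored outside the current task.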

How do LLM agents plan and break down tasks?

Planning is what allows LLM agents to handle complex goals that require multiple decision points, branching logic, or sequential dependencies. Rather than attempting to accomplish everything in a single step, effective agents decompose complex goals into manageable subtasks.

This planning capability often leverages reasoning strategies such as chain-of-thought prompting, where the agent explicitly outlines its approach before taking action. By thinking through the problem systematically, the agent can identify dependencies, anticipate potential issues, and create a logical sequence of steps.

For example, when processing an invoice, an LLM agent might plan the following sequence:

- Extract key data from the invoice document

- Identify the associated purchase order number

- Retrieve the original purchase order from the ERP system

- Compare line items between the invoice and the purchase order

- Flag any discrepancies for review or proceed to approval

This structured approach to planning ensures that complex workflows are handled systematically and that each step builds appropriately on previous results.
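One way to represent such a plan is as a dependency graph, where each step lists the steps it needs first. The sketch below uses Python's standard `graphlib` module; the step names are hypothetical labels for the invoice sequence above, and in a real agent the LLM would produce the plan rather than a hard-coded dictionary.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps that must finish before it can run.
plan = {
    "extract_invoice_data": set(),
    "identify_po_number": {"extract_invoice_data"},
    "retrieve_purchase_order": {"identify_po_number"},
    "compare_line_items": {"extract_invoice_data", "retrieve_purchase_order"},
    "flag_or_approve": {"compare_line_items"},
}

# static_order() yields every step only after all of its dependencies.
execution_order = list(TopologicalSorter(plan).static_order())

for position, step in enumerate(execution_order, start=1):
    print(position, step)
```

Expressing dependencies explicitly also surfaces planning mistakes early: `TopologicalSorter` raises `CycleError` if two steps depend on each other.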

How do LLM agents use tools and external systems?

Tool use is what transforms an LLM from a text generator into an agent capable of taking real-world actions. By calling external tools such as APIs, databases, document extractors, code interpreters, and enterprise systems, LLM agents extend their capabilities far beyond text generation.

This is a critical distinction in LLM agent architecture. While a standalone language model can only produce text, an agent equipped with tools can:

- Query databases to retrieve customer information, transaction histories, or inventory levels

- Extract data from documents using specialized document intelligence capabilities

- Call APIs to update records in enterprise systems such as SAP, Workday, or Salesforce

- Execute code to perform calculations, data transformations, or complex logic

- Send communications via email, messaging platforms, or ticketing systems

Tool use is what allows LLM agents to interact with the real world and take concrete actions on behalf of users or organizations. Without this capability, agents would be limited to providing recommendations rather than actually completing work.
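A common way to wire tools into an agent is a registry that maps tool names to functions, so the agent can request a tool by name. The following is a minimal sketch under that assumption; the registry, the decorator, and both tools are invented for illustration and use in-memory data rather than real systems.

```python
from typing import Any, Callable

TOOL_REGISTRY: dict[str, Callable[..., Any]] = {}

def tool(name: str):
    """Register a function under a name the agent can request."""
    def decorator(func):
        TOOL_REGISTRY[name] = func
        return func
    return decorator

@tool("query_inventory")
def query_inventory(sku: str) -> int:
    stock = {"WIDGET-1": 42}           # in-memory stand-in for a database
    return stock.get(sku, 0)

@tool("calculate_total")
def calculate_total(unit_price: float, quantity: int) -> float:
    return round(unit_price * quantity, 2)

def call_tool(name: str, **kwargs: Any) -> Any:
    """Dispatch a named tool call, rejecting names that were never registered."""
    if name not in TOOL_REGISTRY:
        raise KeyError(f"Unknown tool: {name}")
    return TOOL_REGISTRY[name](**kwargs)
```

For example, `call_tool("query_inventory", sku="WIDGET-1")` returns the stocked quantity, while a request for an unregistered tool fails loudly instead of being silently ignored.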

How do LLM agents differ from standard large language models?

Understanding the distinction between LLM agents and standard large language models is essential for evaluating AI solutions. While both are built on similar underlying technology, their capabilities and applications differ significantly.

A standard large language model responds to a single prompt with a single output. It has no inherent ability to take actions, retain context beyond the immediate conversation, or interact with external systems. When you ask a question, you receive an answer – and that’s the end of the interaction.

An LLM agent, by contrast, operates in a continuous loop. It can take actions, use tools, and manage memory across multi-step workflows. When given a task, it reasons about how to accomplish it, takes the necessary steps, evaluates results, and continues until the work is complete.

Here’s a comparison of the key differences:

| Capability | Standard LLM | LLM agent |
| --- | --- | --- |
| Input/Output | Single prompt in, single response out | Continuous reasoning loop |
| External Actions | None | Tool calls and real-world actions |
| Memory | Limited to conversation | Persistent memory across steps |
| Task Completion | Provides information | Completes tasks autonomously |
| Adaptability | Static response | Dynamic adaptation based on results |

This distinction has profound implications for enterprise automation. While a standard LLM might be useful for answering questions or drafting content, an LLM agent can actually execute workflows, process documents, and update systems – capabilities that deliver measurable operational impact.

What are the main types of LLM agents?

As large language model agents mature, several distinct types have emerged to address different enterprise needs. Understanding these types helps organizations select the right approach for their specific use cases.

Single-agent systems

Single-agent systems deploy one agent that is responsible for completing an entire task end to end. This agent handles all reasoning, planning, and action execution for a given workflow. Single-agent systems are simpler to implement and work well for straightforward processes with clear steps.

Multi-agent systems

Multi-agent systems deploy multiple specialized agents that collaborate to complete complex tasks. An orchestrator agent manages task routing and coordination, delegating specific subtasks to agents with relevant expertise. This approach is particularly effective for workflows that span multiple domains or require different types of specialized knowledge.
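The routing role of an orchestrator can be illustrated with a short sketch. Each specialist agent is reduced to a plain function, and routing is done by keyword match only to keep the example self-contained; a real orchestrator would usually let an LLM make the routing decision. All names here are hypothetical.

```python
from typing import Callable

# Hypothetical specialist agents, each reduced to a plain function.
def invoice_agent(task: str) -> str:
    return f"invoice agent handled: {task}"

def procurement_agent(task: str) -> str:
    return f"procurement agent handled: {task}"

SPECIALISTS: dict[str, Callable[[str], str]] = {
    "invoice": invoice_agent,
    "purchase order": procurement_agent,
}

def orchestrate(task: str) -> str:
    """Route a task to the first specialist whose keyword appears in it."""
    lowered = task.lower()
    for keyword, agent in SPECIALISTS.items():
        if keyword in lowered:
            return agent(task)
    return f"no specialist found, escalating: {task}"
```

Keeping the routing table separate from the agents means a new specialist can be added without touching the existing ones.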

Worker agents (unattended)

Worker agents operate autonomously without human interaction, processing tasks 24/7. These agents are ideal for high-volume, repetitive workflows where the logic is well-defined and exceptions are rare. Organizations deploy worker agents to handle tasks like invoice processing, data extraction, and routine system updates.

Assisted agents (attended)

Assisted agents work alongside humans, providing recommendations or handling sub-tasks while keeping humans in the loop for decisions that require judgment. This approach is valuable for high-stakes processes where human oversight is required or for workflows where exceptions are common and require human judgment.

What are the benefits of LLM agents for enterprise organizations?

LLM agents enable enterprise organizations to automate complex, document-driven, and judgment-intensive workflows that traditional automation tools could not handle. While earlier technologies like RPA excelled at simple, rule-based tasks, they struggled with processes requiring interpretation, adaptation, or reasoning.

Here are the primary benefits that LLM agents deliver:

Reduced manual effort on high-volume tasks. LLM agents can process thousands of documents, emails, or transactions without human intervention. Tasks that previously required hours of manual work can be completed in minutes.

Improved accuracy by eliminating manual data entry errors. Human data entry is inherently error-prone, particularly for repetitive tasks. LLM agents do not tire or lose focus, so they apply the same checks to the thousandth document as to the first.

Scalability across enterprise systems. LLM agents can be configured to work with existing enterprise applications without requiring extensive developer resources. This allows organizations to scale automation across departments and processes.

Auditability and transparency through structured reasoning traces. Unlike black-box AI systems, well-designed LLM agents provide clear documentation of their reasoning and actions. This transparency is essential for compliance, debugging, and continuous improvement.

Adaptability to variable inputs. Different invoice formats, document types, or request structures don’t require separate automation rules. LLM agents can interpret variations and adapt their approach accordingly.
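As an illustration of the structured reasoning traces mentioned above, an agent can record each step as a small structured event and export the trail for later review. This is one possible shape, not a standard format; the class and field names are assumptions made for the example.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class TraceEvent:
    step: int
    reasoning: str
    action: str
    result: str

@dataclass
class AuditTrail:
    events: list[TraceEvent] = field(default_factory=list)

    def record(self, reasoning: str, action: str, result: str) -> None:
        """Append one step, numbering steps from 1 in the order they occur."""
        self.events.append(TraceEvent(len(self.events) + 1, reasoning, action, result))

    def to_json_lines(self) -> str:
        """Serialize the trail as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e)) for e in self.events)

trail = AuditTrail()
trail.record("Invoice references a PO", "fetch_purchase_order", "PO-1001 found")
trail.record("Totals differ by 5.00", "flag_for_review", "routed to AP team")
print(trail.to_json_lines())
```

One JSON object per line is convenient because log tooling can append, search, and replay the trail without parsing the whole file.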

What challenges do organizations face when deploying LLM agents?

While LLM agents offer significant potential, organizations must address several challenges to deploy them successfully in enterprise environments.

Accuracy and hallucination risks. Large language models can occasionally generate incorrect information or make errors in reasoning. For high-stakes financial or operational decisions, organizations need safeguards to catch and correct these issues.

Data security and governance requirements. Enterprise-grade deployments require that sensitive data remain within organizational boundaries. Solutions must meet strict security, privacy, and compliance requirements. Learn more about enterprise-grade security for AI agents and how Sema4.ai keeps data within enterprise infrastructure.

Integration complexity. Connecting LLM agents to existing enterprise systems – ERP platforms, databases, legacy applications – requires careful planning and technical expertise.

Human oversight and exception handling. Organizations need to define clear escalation paths for situations where agents encounter uncertainty or exceptions that require human judgment.

Developer resource requirements. Building, testing, and maintaining agents requires technical expertise, which can be a bottleneck for organizations with limited AI development resources.
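Two of the challenges above, catching model errors and defining escalation paths, are often handled together with a validation gate in front of any system update. The sketch below checks that extracted line items add up to the stated total and escalates when they do not or when confidence is low. The `confidence` value is a hypothetical score that the agent or extraction tool is assumed to report, and the 0.8 threshold is arbitrary.

```python
from dataclasses import dataclass, field

@dataclass
class Review:
    decision: str                      # "proceed" or "escalate"
    reasons: list[str] = field(default_factory=list)

def review_extraction(invoice: dict, confidence: float,
                      min_confidence: float = 0.8, tolerance: float = 0.01) -> Review:
    """Check agent output before it touches a financial system."""
    reasons = []
    line_sum = sum(i["quantity"] * i["unit_price"] for i in invoice.get("line_items", []))
    if "total" not in invoice:
        reasons.append("missing total")
    elif abs(line_sum - invoice["total"]) > tolerance:
        reasons.append(f"line items sum to {line_sum:.2f}, total says {invoice['total']:.2f}")
    if confidence < min_confidence:
        reasons.append(f"confidence {confidence:.2f} below {min_confidence:.2f}")
    # Any recorded reason sends the item to a human reviewer.
    return Review("escalate" if reasons else "proceed", reasons)

items = [{"quantity": 2, "unit_price": 10.0}]
ok = review_extraction({"line_items": items, "total": 20.0}, confidence=0.95)
bad = review_extraction({"line_items": items, "total": 25.0}, confidence=0.5)
```

Returning the reasons alongside the decision gives the human reviewer the context needed to resolve the exception quickly.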

What frameworks and approaches support LLM agents?

A variety of frameworks and architectural patterns support LLM agent development. Understanding these approaches helps organizations evaluate platforms and make informed technology decisions.

The ReAct pattern

The ReAct (Reason + Act) pattern is a widely recognized approach that structures how agents reason and take actions in alternating steps. In this pattern, the agent first reasons about what to do, then takes an action, observes the result, and reasons again about the next step. This structured alternation between thinking and acting improves reliability and transparency.
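A toy version of the reason-act-observe alternation can look like the following. The `Thought:` / `Action:` / `Observation:` text format is a common convention for this pattern, but the exact syntax here is an assumption, `scripted_llm` is a hypothetical stand-in for a real model, and `add_numbers` is an invented tool.

```python
import re

def add_numbers(expression: str) -> str:
    """Hypothetical tool: sums comma-separated numbers."""
    return str(sum(float(x) for x in expression.split(",")))

REACT_TOOLS = {"add": add_numbers}

def scripted_llm(transcript: str) -> str:
    """Stand-in for a model: emits a thought plus either an action or a final answer."""
    if "Observation:" not in transcript:
        return "Thought: I need the invoice total.\nAction: add[100.0,25.5]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have the total.\nFinal Answer: {observation}"

def react(question: str, llm=scripted_llm, max_turns: int = 3) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_turns):
        output = llm(transcript)                         # reason
        transcript += output + "\n"
        final = re.search(r"Final Answer: (.*)", output)
        if final:
            return final.group(1)
        action = re.search(r"Action: (\w+)\[(.*)\]", output)
        if action is None or action.group(1) not in REACT_TOOLS:
            return "escalate: no usable action or answer produced"
        name, argument = action.groups()
        observation = REACT_TOOLS[name](argument)        # act
        transcript += f"Observation: {observation}\n"    # observe
    return "escalate: turn limit reached"
```

Because every thought, action, and observation is appended to the transcript, the full reasoning path is available afterwards for inspection.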

Tool-calling APIs and function calling

Modern large language models increasingly support tool-calling APIs and function-calling capabilities that make it easier to build agents. These capabilities allow the model to identify when a tool should be called, specify the appropriate parameters, and interpret the results – all within the natural flow of reasoning.
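In practice, the application describes each tool to the model in a structured form, the model returns a structured call, and the application validates and executes it. The sketch below shows that round trip with a JSON-Schema-like tool description. Each provider defines its own exact format, so the layout, the `get_payment_status` tool, and the shape of the model's call are all illustrative assumptions.

```python
import json

# Hypothetical tool description; real providers each define their own format.
GET_PAYMENT_STATUS = {
    "name": "get_payment_status",
    "description": "Look up the payment status of an invoice.",
    "parameters": {
        "type": "object",
        "properties": {"invoice_id": {"type": "string"}},
        "required": ["invoice_id"],
    },
}

def get_payment_status(invoice_id: str) -> dict:
    return {"invoice_id": invoice_id, "status": "scheduled"}   # stubbed lookup

def handle_tool_call(raw_call: str) -> str:
    """Validate a model-emitted call against the declared parameters, then run it."""
    call = json.loads(raw_call)
    if call.get("name") != GET_PAYMENT_STATUS["name"]:
        return json.dumps({"error": "unknown tool"})
    arguments = call.get("arguments", {})
    required = GET_PAYMENT_STATUS["parameters"]["required"]
    missing = [p for p in required if p not in arguments]
    if missing:
        return json.dumps({"error": f"missing arguments: {missing}"})
    return json.dumps(get_payment_status(**arguments))

# Simulated model output requesting the tool.
model_output = '{"name": "get_payment_status", "arguments": {"invoice_id": "INV-9"}}'
print(handle_tool_call(model_output))
```

Returning errors as JSON rather than raising lets the result be fed back to the model, which can then correct its own call on the next turn.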

Natural language runbooks

Natural language runbooks represent a business-user-friendly alternative to code-based agent configuration. Rather than requiring developers to write code, natural language runbooks allow business users to define agent behavior using plain English instructions. This approach dramatically reduces the technical barrier to agent development and enables domain experts to configure automation directly.

Enterprise platforms such as Sema4.ai build on these foundational patterns to deliver production-ready agent infrastructure that organizations can deploy with confidence.

How are LLM agents used in production today?

LLM agents are already running in production. Enterprise organizations across finance, procurement, and operations deploy them to automate real workflows and deliver measurable business impact.

Here are examples of LLM agents in production:

Automated invoice processing and three-way matching. LLM agents process incoming invoices, extract relevant data, match invoices against purchase orders and receiving documents, identify discrepancies, and route exceptions for human review. This automation handles high volumes with consistent accuracy.

AP help desk automation. Agents autonomously resolve supplier inquiries about payment status, missing invoices, and account discrepancies. By retrieving information from enterprise systems and responding to common questions, agents dramatically reduce response times and free human staff for complex issues.

Procurement workflow automation. From requisition to payment, LLM agents orchestrate the entire procurement process – routing approvals, validating compliance, and maintaining audit trails throughout.

Document intelligence for complex financial documents. AI-powered document intelligence enables agents to process complex, multi-format documents such as invoices, contracts, and purchase orders with near-perfect accuracy. This capability is essential for automating document-heavy workflows in finance and operations.
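The three-way matching mentioned in the first example above comes down to comparing an invoice against the purchase order and the receiving record. Here is a simplified sketch of that comparison. It assumes each document has already been extracted into a dictionary keyed by item code, which is an invented structure for illustration; real matching rules are usually richer, with tolerances per vendor or category.

```python
def three_way_match(invoice: dict, purchase_order: dict, receipt: dict,
                    price_tolerance: float = 0.01) -> list[str]:
    """Return discrepancies between invoice, PO, and receipt (empty means matched).

    invoice and purchase_order map item code -> {"quantity": int, "unit_price": float};
    receipt maps item code -> {"quantity": int}.
    """
    discrepancies = []
    for item, inv in invoice.items():
        po = purchase_order.get(item)
        received = receipt.get(item)
        if po is None:
            discrepancies.append(f"{item}: not on purchase order")
            continue
        if received is None:
            discrepancies.append(f"{item}: no receiving record")
            continue
        if abs(inv["unit_price"] - po["unit_price"]) > price_tolerance:
            discrepancies.append(f"{item}: invoiced price differs from purchase order")
        if inv["quantity"] > received["quantity"]:
            discrepancies.append(f"{item}: invoiced quantity exceeds quantity received")
    return discrepancies

po = {"A1": {"quantity": 10, "unit_price": 5.0}}
receipt = {"A1": {"quantity": 8}}
invoice = {"A1": {"quantity": 10, "unit_price": 5.0}}
issues = three_way_match(invoice, po, receipt)
```

In this example the invoice bills for ten units while only eight were received, so `issues` contains one discrepancy that an agent would route for human review.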

See how Sema4.ai is used in production today:

- Learn more about AI agent use cases

- Read about customer successes

- Listen to our webinar AI Agents in the Wild & see real demos

How does Sema4.ai use LLM agents for enterprise automation?

Sema4.ai applies LLM agent technology within its enterprise AI agent platform to help organizations automate complex, document-driven workflows. The platform is designed to enable business users to configure automation using natural language runbooks, eliminating the need for extensive developer resources.

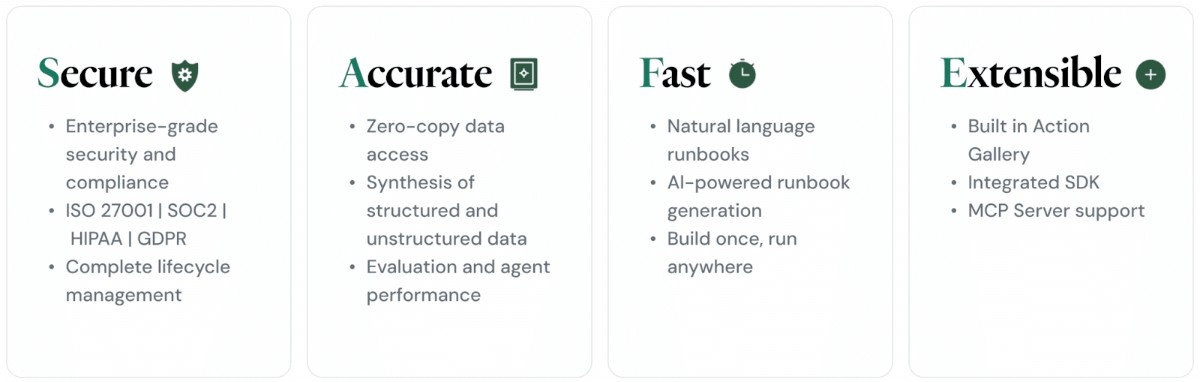

The Sema4.ai platform is built around the SAFE framework:

- Secure: We prioritize your data’s integrity and confidentiality. Our system is engineered to operate entirely within your existing security infrastructure, ensuring that all data processing and storage adhere to your established security protocols and boundaries. This approach minimizes external vulnerabilities and provides you with complete control over your sensitive information.

- Accurate: At the core of our system’s intelligence are industry-leading large language models (LLMs). We leverage the power of advanced AI from providers such as OpenAI, Gemini, and Snowflake Cortex AI. This multi-faceted approach ensures that our output is highly accurate, contextually relevant, and continuously refined by the latest advancements in AI research.

- Fast: We understand the importance of rapid deployment and operational efficiency. Our solution boasts one-click deployment capabilities, allowing you to integrate and activate the system swiftly and seamlessly. This quick setup translates to immediate productivity gains and a significantly reduced time-to-value.

- Extensible: Our architecture is built for flexibility and future-proofing. It is designed to effortlessly connect with any enterprise application within your ecosystem. This extensibility ensures that our system can integrate with your existing workflows, data sources, and business tools, providing a unified and comprehensive solution that adapts to your evolving needs.

Learn more about Sema4.ai Agents

Key differentiators

Business user empowerment. Sema4.ai enables teams to build LLM agents using natural language runbooks, reducing developer dependency. With Studio, domain experts configure automation directly without writing code.

Document Intelligence. The platform processes complex invoices across multiple formats with near-perfect accuracy. AI-guided configuration allows business users to teach the system how documents should be understood, with automatic adaptation to handle variations.

Real-world impact. Organizations using Sema4.ai have achieved dramatic results:

- Invoice reconciliation reduced from 3 hours to approximately 2 minutes per invoice

- AP help desk response times reduced from 24–48 hours to approximately 10 minutes

- 90% autonomous resolution rate for routine inquiries

Teams can interact with agents through Work Room, a dedicated interface where business users collaborate with both conversational and worker agents to get work done.

What does the future of LLM agents look like?

The future of LLM agents in enterprise settings is shaped by several emerging trends that will expand their capabilities and accessibility.

Multi-agent collaboration and orchestration. Complex enterprise workflows will increasingly be handled by teams of specialized agents working together, with orchestration layers managing task routing and coordination.

Business user empowerment. Natural language configuration and no-code agent development will continue to lower barriers, enabling domain experts to build and refine agents without developer involvement.

Deeper enterprise system integration. LLM agents will connect more deeply with ERP, CRM, and financial platforms, enabling end-to-end automation of processes that span multiple systems.

Improved reasoning accuracy. Advances in large language model capabilities will continue to improve agent reliability, reducing hallucinations and errors in complex reasoning tasks.

Greater transparency and auditability. As agents take on more critical business functions, platforms will provide increasingly sophisticated tools for monitoring agent behavior, explaining decisions, and maintaining compliance.

Modern platforms such as Sema4.ai are already enabling many of these capabilities, allowing business users to configure automation using natural language runbooks while maintaining enterprise-grade transparency and control.

What questions do people ask about LLM agents?

What is an LLM agent?

An LLM agent is an AI system that uses a large language model as its core reasoning engine to plan, take actions, and complete tasks autonomously. Unlike standard chatbots, LLM agents connect to tools, memory systems, and external data sources to execute multi-step workflows without constant human guidance.

How does an LLM agent work?

An LLM agent operates in a continuous loop: it receives a task, reasons about how to accomplish it, selects and calls appropriate tools, evaluates the results, and repeats until the task is complete. This perception-reasoning-action cycle enables agents to handle complex workflows autonomously.

What are the core components of LLM agent architecture?

LLM agent architecture includes four core components: the LLM reasoning engine that interprets instructions and makes decisions, memory systems for context retention, planning capabilities for task decomposition, and tool-use integrations that enable real-world actions.

How do LLM agents differ from regular LLMs?

Standard LLMs respond to a single prompt with a single output and cannot take actions or retain persistent memory. LLM agents, by contrast, operate in continuous loops, use tools to interact with external systems, maintain memory across steps, and complete tasks autonomously.

What frameworks support LLM agents?

LLM agent development is supported by patterns like ReAct (Reason + Act), tool-calling APIs in modern language models, and natural language runbooks that allow business users to configure agents without code. Enterprise platforms build on these foundations for production deployments.

Are LLM agents used in production today?

Yes, LLM agents are deployed in production across enterprise finance, procurement, operations, and more. Organizations use them for invoice processing, AP help desk automation, procurement workflows, and document intelligence – achieving significant reductions in processing time and manual effort.

Back office and operational functions represent the highest-value targets for AI agent deployment. These areas share critical characteristics that make them exceptionally well-suited for intelligent automation, and a great place to start.

Back office operations are ideal for AI agent automation for several reasons:

- High-volume repetitive work: Back office teams process thousands of similar transactions – invoices, purchase orders, support tickets, contracts – creating significant opportunities for automation at scale.

- Multi-system complexity: Operational work typically requires accessing multiple enterprise systems to complete a single task. AI agents excel at orchestrating cross-system workflows, eliminating the manual switching and data re-entry that consumes employee time.

- Document-intensive processes: Finance, HR, legal, and supply chain operations revolve around processing unstructured documents. Modern AI agents can read, understand, and extract data from PDFs, emails, and images with near-human accuracy.

- Clear success metrics: Unlike some strategic initiatives, back office automation delivers measurable impact through reduced processing time, improved accuracy rates, and quantifiable cost savings that justify continued investment.

- Lower risk testing ground: Operational use cases allow organizations to deploy AI agents in controlled environments, validate performance, and build confidence before expanding to customer-facing or revenue-critical applications.

Organizations implementing back office AI agents report a 70–90% reduction in invoice processing time, 50% faster time-to-hire in HR, and dramatic improvements in compliance audit performance.

Many organizations are exploring enterprise AI agents to automate complex workflows. Learn how the Sema4.ai Enterprise AI Agent Platform enables teams to build and deploy LLM agents using natural language runbooks with enterprise-grade security and control.