I’m going to make a bold statement: the semantic layer is the most underestimated capability in enterprise AI, and its absence is why most agent deployments fail to scale beyond pilots.

After years of building enterprise automation platforms and watching countless AI initiatives stall, I’ve become convinced that the industry has been solving the wrong problem. We’ve obsessed over making LLMs smarter, faster, and cheaper while ignoring a fundamental truth – agents are only as useful as their understanding of your data.

The data access crisis no one talks about

Here’s what actually happens in most enterprises today: a business analyst needs to reconcile invoices against payment records. Simple enough, right? Except the invoice data lives in PDFs with dozens of different formats. The payment records are in Snowflake, but you need SQL expertise to query them. And when you finally extract both datasets manually, you’re stuck joining them in Excel – prone to errors, impossible to audit, and completely unsuited for the millions of rows enterprises actually process.

This isn’t an edge case. This is how work gets done in 2026.

The result? Business users waste days on tasks that should take minutes. Data engineering teams become bottlenecks for every analysis request. And AI agents, despite all their sophisticated reasoning capabilities, sit idle because they can’t access the data they need to actually do work.

Why LLMs alone will never solve this

The AI industry’s default answer has been to throw more powerful models at the problem. “Just use GPT-5!” “Claude Opus 4 can handle this!” But here’s the uncomfortable truth: even the most advanced LLMs fundamentally misunderstand enterprise data challenges.

LLMs see data syntactically, not semantically. They can generate SQL queries, sure – but they don’t understand what your customer_id field actually means to your business, how it relates to your account_number in a different system, or why certain invoice formats require special handling. They can extract text from documents, but they can’t learn your business rules for validation or adapt to the endless variations in how vendors format invoices.

And critically, LLMs are unreliable at exact arithmetic. When you need mathematically accurate reconciliation of financial data across millions of rows, sending everything through an LLM’s context window isn’t just expensive – it produces results you cannot verify. You can’t build auditable financial processes on technology that occasionally hallucinates numbers.

The three-dimensional solution enterprises actually need

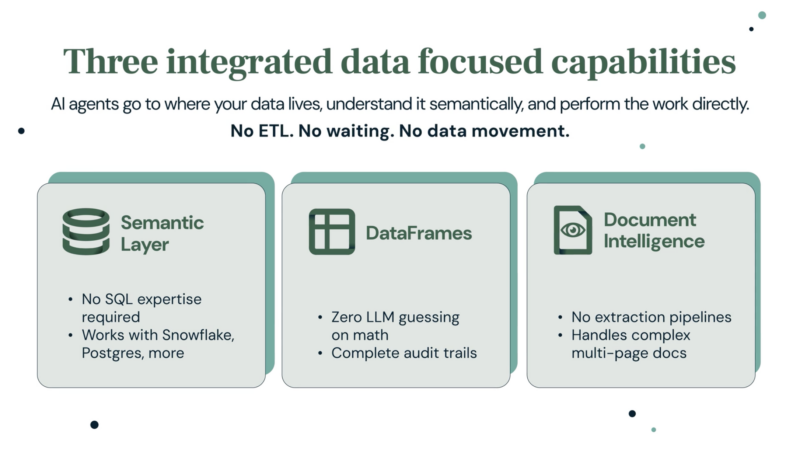

This is why we built our semantic layer as an integrated system combining three capabilities that must work together:

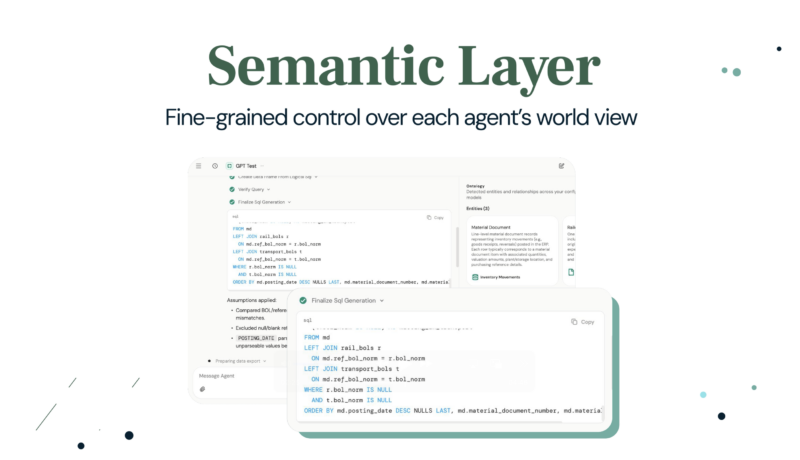

Semantic data models that teach agents what data means, not just where it lives. Our AI automatically profiles your database structures and learns business context, enabling agents to understand that “revenue” in your Snowflake warehouse relates to “total amount” on your invoices, even when the field names differ.

DataFrames that provide agents with an intelligent workspace for mathematically precise analysis. Unlike LLM-based approaches, DataFrames uses SQL for all calculations, ensuring complete accuracy and auditability when processing millions of rows across multiple data sources.

Document Intelligence that transforms unstructured data into agent-ready structured information. Business users teach the system how to understand their documents once through AI-guided configuration, then it automatically adapts to variations – handling 100+ page invoices with dense tables across any format or language.
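To make the idea of a shared business vocabulary concrete, here is a minimal sketch of how one concept can be bound to different physical names in different sources. It is an illustration only, not our implementation and not the OSI format: the `SEMANTIC_MODEL` dictionary, the source names `warehouse` and `invoice`, and the `to_canonical` helper are all hypothetical.

```python
# Hypothetical semantic model: each business concept lists the physical
# name it goes by in each source. Every name here is illustrative.
SEMANTIC_MODEL = {
    "revenue": {"warehouse": "revenue", "invoice": "total amount"},
    "customer": {"warehouse": "customer_id", "invoice": "account_number"},
}


def to_canonical(record: dict, source: str) -> dict:
    """Rename a source record's fields to the shared business concepts."""
    # Invert the model for this source: physical field name -> concept.
    physical_to_concept = {
        names[source]: concept
        for concept, names in SEMANTIC_MODEL.items()
        if source in names
    }
    # Keep only fields the model knows about, under their concept names.
    return {
        physical_to_concept[field]: value
        for field, value in record.items()
        if field in physical_to_concept
    }


# Two records that use different field names for the same concepts.
warehouse_row = to_canonical({"customer_id": "C-17", "revenue": 1200}, "warehouse")
invoice_row = to_canonical({"account_number": "C-17", "total amount": 1200}, "invoice")
```

Once both records are expressed in the same concepts, an agent can compare or join them without knowing the field names each system happens to use.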

The magic happens in how these capabilities integrate. When an agent queries your database through a semantic data model, results automatically become DataFrames for further analysis. When Document Intelligence extracts tables from a PDF, that data instantly becomes a structured DataFrame ready for joining with database queries. An agent can extract invoice line items from a complex PDF, join that with payment records from your ERP, and perform mathematically precise reconciliation analysis – all through natural conversation.
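The reconciliation step can be sketched with nothing more than SQL. The example below is an assumption-laden illustration rather than our product's API: it uses Python's built-in `sqlite3` module as a stand-in for the warehouse, the table and column names are hypothetical, and amounts are stored as integer cents so that every comparison is exact.

```python
import sqlite3


def reconcile(invoices, payments):
    """Match invoices to payments in SQL and label each invoice.

    invoices: iterable of (invoice_id, amount_cents), one row per invoice
    payments: iterable of (invoice_id, amount_cents), possibly several per invoice
    Returns rows of (invoice_id, amount_cents, paid_cents, status).
    """
    con = sqlite3.connect(":memory:")  # stand-in for a real warehouse
    con.execute(
        "CREATE TABLE invoices (invoice_id TEXT PRIMARY KEY, amount_cents INTEGER)"
    )
    con.execute("CREATE TABLE payments (invoice_id TEXT, amount_cents INTEGER)")
    con.executemany("INSERT INTO invoices VALUES (?, ?)", invoices)
    con.executemany("INSERT INTO payments VALUES (?, ?)", payments)

    # All arithmetic happens in the database on integers, not in a model.
    rows = con.execute(
        """
        SELECT i.invoice_id,
               i.amount_cents,
               COALESCE(SUM(p.amount_cents), 0) AS paid_cents,
               CASE
                   WHEN COUNT(p.invoice_id) = 0 THEN 'unpaid'
                   WHEN SUM(p.amount_cents) = i.amount_cents THEN 'matched'
                   ELSE 'mismatch'
               END AS status
        FROM invoices AS i
        LEFT JOIN payments AS p ON p.invoice_id = i.invoice_id
        GROUP BY i.invoice_id, i.amount_cents
        ORDER BY i.invoice_id
        """
    ).fetchall()
    con.close()
    return rows


# Illustrative data: line items as they might arrive from document
# extraction, and payment records as they might arrive from an ERP.
example_invoices = [("INV-1", 10000), ("INV-2", 2550), ("INV-3", 500)]
example_payments = [("INV-1", 6000), ("INV-1", 4000), ("INV-2", 2500)]
example_result = reconcile(example_invoices, example_payments)
```

Because the query is plain SQL, the same statement that produced a result can be stored and rerun, which is what makes the outcome auditable.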

Why we built on open standards

We made a deliberate architectural choice to build our semantic data models using Snowflake’s Open Semantic Interchange (OSI) format. This wasn’t just about standards compliance – it reflects a core belief about how enterprise technology should work.

Your semantic understanding of data is too valuable to lock into a proprietary format. As the semantic layer ecosystem evolves, your models should be portable, shareable, and compatible with emerging tools and platforms. OSI provides that foundation, ensuring your investment in semantic modeling remains flexible as the landscape changes.

Real results that matter

I’m a builder, not a marketer, so I care most about what actually works in production. Our early customers are seeing results that validate this architectural approach:

A large manufacturer now reconciles gas invoices in 2 minutes instead of 3 hours, processing 350+ monthly invoices with over 90% autonomous accuracy. A financial services team reduced cash matching review from hours to minutes while increasing accuracy from 20% to over 80%. Process analysts now complete complex data reconciliation across multiple sources in minutes rather than weeks.

These aren’t incremental improvements. They’re order-of-magnitude transformations in how work gets done.

The bottom line for technical leaders

If you’re evaluating AI agent platforms, here’s my strong recommendation: don’t settle for solutions that handle only structured data or only unstructured data. Don’t accept platforms that require your business users to know SQL. And absolutely don’t deploy agents that use LLMs for mathematical operations on business-critical data.

The semantic layer is not optional infrastructure. It’s the foundational capability that determines whether your agents can actually do useful work or remain expensive chatbots.

Enterprise AI agents will only deliver on their promise when they can understand all your data – databases, documents, spreadsheets – with true semantic comprehension and mathematical precision. That’s what we’ve built, and it’s available today.

We’re announcing general availability of these capabilities at the Gartner Data & Analytics Summit this week. If you’re attending, come find me in booth 207 or at our session, “From Data Silos to Intelligent Action: Building AI Agents that Work with Your Data” on Wednesday, Mar 11 at 2 ET in Osceola 5. I’d love to show you how semantic understanding transforms what’s possible with enterprise AI agents.

Paul Codding is co-founder and SVP of Product and Customer Experience at Sema4.ai. He has spent two decades building enterprise automation platforms and remains convinced that the best technology is the kind that disappears into how work gets done.